About

The Cornell Phonetics Lab is a group of students and faculty who are curious about speech. We study patterns in speech, in both movement and sound. We do a variety of research: experiments, fieldwork, and corpus studies. We test theories and build models of the mechanisms that create patterns. Learn more about our Research. See below for information on our events and our facilities.

Upcoming Events

27th March 2024 12:20 PM

PhonDAWG - Phonetics Lab Data Analysis Working Group

We will read and discuss the following paper by Dr. Amanda Rysling (and don't forget to attend her colloquium talk on Thursday!)

Processing of linguistic focus depends on contrastive alternatives by Morwenna Hoeks, Maziar Toosarvandani, and Amanda Rysling; Journal of Memory and Language Volume 132, October 2023

Location: B11 Morrill Hall, 159 Central Avenue, Morrill Hall, Ithaca, NY 14853-4701, USA

28th March 2024 04:30 PM

Linguistics Colloquium Speaker: Amanda Rysling to speak on Linguistic Efficiency and what it takes to comprehend (a) focus

The Cornell Linguistics Department proudly presents Linguistics Colloquium Speaker Dr. Amanda Rysling, Assistant Professor at the University of California, Santa Cruz.

Dr. Rysling will give a talk titled: "A new window on linguistic efficiency: what it takes to comprehend (a) focus."

Funded in part by the GPSAFC and Open to the Graduate Community.

Abstract:

Over the past half-century, psycholinguistic studies of linguistic focus — often described only as the most important or informative material — have found that comprehenders preferentially attend to focused material and process it more "deeply" or "effortfully" than non-focused material.

But psycholinguists have investigated only a limited subset of focus constructions, and we have not come to an understanding of how costly focus is to process, what factors govern that cost, or why the language comprehension system behaves in the way that it does, and not others.

In this talk, I discuss the problem for language comprehenders presented by the category of focus, and present evidence that focus processing is generally costly, but this cost can be attenuated by the presence of contrastive alternatives to a focus in the context before that upcoming focus, as would be expected on the basis of proposals in formal semantics (see Rooth, 1992, i.m.a.).

Evidence from the processing of second-occurrence foci demonstrates that comprehenders seem to work harder than our general models of sentence processing would posit that they should have to in comprehending given focused material.

These findings add to our understanding of what it means to be good enough or efficient in language processing, delineating conditions under which comprehenders do (not) find apparently important material to be worth processing deeply or effortfully.

Bio:

Dr. Rysling is an assistant professor in the Department of Linguistics at the University of California, Santa Cruz. She is interested in how language is represented and processed; in particular, she seeks cases in which the processing of a linguistic unit is affected by the phonetic, phonological, or prosodic context in which that unit occurs.

This theme unifies her work on segmental perception, lexical bias effects, cues to prosodic structure, sonority sequencing and vowel alternations in Polish, and, more recently, semantic representation and processing.

Her name is pronounced either [rɨslɨŋ], with igryk, or [ɹislɨŋ], like the wine.

Location: 106 Morrill Hall, 159 Central Avenue, Morrill Hall, Ithaca, NY 14853-4701, USA

8th April 2024 12:20 PM

Phonetics Lab Meeting

No meeting - enjoy the eclipse!

Location: B11 Morrill Hall, 159 Central Avenue, Morrill Hall, Ithaca, NY 14853-4701, USA

10th April 2024 12:20 PM

PhonDAWG - Phonetics Lab Data Analysis Working Group

Bruce and Sam will give a tutorial on the Linux Screen utility - a tool necessary for running long-term jobs on our compute server Uvular and on the G2 cluster. When you arrive in B11, please connect to the P-lab VPN and log into Uvular.

Location: B11 Morrill Hall, 159 Central Avenue, Morrill Hall, Ithaca, NY 14853-4701, USA

Facilities

The Cornell Phonetics Laboratory (CPL) provides an integrated environment for the experimental study of speech and language, including its production, perception, and acquisition.

Located in Morrill Hall, the laboratory consists of six adjacent rooms and covers about 1,600 square feet. Its facilities include a variety of hardware and software for analyzing and editing speech, for running experiments, for synthesizing speech, and for developing and testing phonetic, phonological, and psycholinguistic models.

Web-Based Phonetics and Phonology Experiments with LabVanced

The Phonetics Lab licenses the LabVanced software for designing and conducting web-based experiments.

LabVanced has particular value for phonetics and phonology experiments because of its:

- Flexible audio/video recording capabilities and online eye-tracking

- Presentation of any kind of stimuli, including audio and video

- Highly accurate response time measurement

- Graphical task builder, which lets researchers build experiments interactively without having to write any code

Students and faculty are currently using LabVanced to design web experiments involving eye-tracking, audio recording, and perception studies.

Subjects are recruited via several online systems:

- Prolific and Amazon Mechanical Turk - subjects for web-based experiments

- Sona Systems - Cornell subjects for LabVanced experiments conducted in the Phonetics Lab's Sound Booth

Computing Resources

The Phonetics Lab maintains two Linux servers that are located in the Rhodes Hall server farm:

- Lingual - This Ubuntu Linux web server hosts the Phonetics Lab Drupal websites, along with a number of event and faculty/grad student HTML/CSS websites.

- Uvular - This Ubuntu Linux dual-processor, 24-core, two-GPU server is the computational workhorse for the Phonetics Lab, and is primarily used for deep-learning projects.

In addition to the Phonetics Lab servers, students can request access to additional computing resources of the Computational Linguistics lab:

- Badjak - a Linux GPU-based compute server with eight NVIDIA GeForce RTX 2080Ti GPUs

- Compute server #2 - a Linux GPU-based compute server with eight NVIDIA A5000 GPUs

- Oelek - a Linux NFS storage server that supports Badjak

These servers, in turn, are nodes in the G2 Computing Cluster, which currently consists of 195 servers (82 CPU-only servers and 113 GPU servers) with a total of ~7400 CPU cores and 698 GPUs.

The G2 Cluster uses the SLURM Workload Manager for submitting batch jobs that can run on any available server or GPU on any cluster node.
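As a rough illustration of what submitting a batch job can look like, the Python sketch below assembles a SLURM batch script from standard `#SBATCH` directives. The job name, command, and resource values are hypothetical placeholders, and the helper names `build_sbatch_script` and `submit` are made up for this example. Actual partition names, limits, and recommended settings for the G2 Cluster are not given here and should be taken from the cluster's own documentation.

```python
import subprocess
from pathlib import Path


def build_sbatch_script(job_name, command, gpus=1, cpus=4, mem_gb=16,
                        time_limit="04:00:00", partition=None):
    """Return the text of a SLURM batch script (all values are illustrative)."""
    lines = [
        "#!/bin/bash",
        f"#SBATCH --job-name={job_name}",
        f"#SBATCH --output={job_name}_%j.out",  # %j expands to the job ID
        f"#SBATCH --time={time_limit}",         # wall-clock limit, HH:MM:SS
        f"#SBATCH --cpus-per-task={cpus}",
        f"#SBATCH --mem={mem_gb}G",
    ]
    if gpus > 0:
        # Request generic resources of type "gpu"
        lines.append(f"#SBATCH --gres=gpu:{gpus}")
    if partition is not None:
        # Partition names are site-specific; none is assumed by default
        lines.append(f"#SBATCH --partition={partition}")
    lines += ["", command, ""]
    return "\n".join(lines)


def submit(script_text, path="job.sh"):
    """Write the script to disk and pass it to sbatch (needs a SLURM login node)."""
    Path(path).write_text(script_text)
    result = subprocess.run(["sbatch", path], check=True,
                            capture_output=True, text=True)
    return result.stdout


if __name__ == "__main__":
    # Hypothetical job: one GPU, running a placeholder training script
    print(build_sbatch_script("train_model", "python train.py", gpus=1))
```

Running the file only prints the generated script. Calling `submit` will work only on a machine where the `sbatch` command is available.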

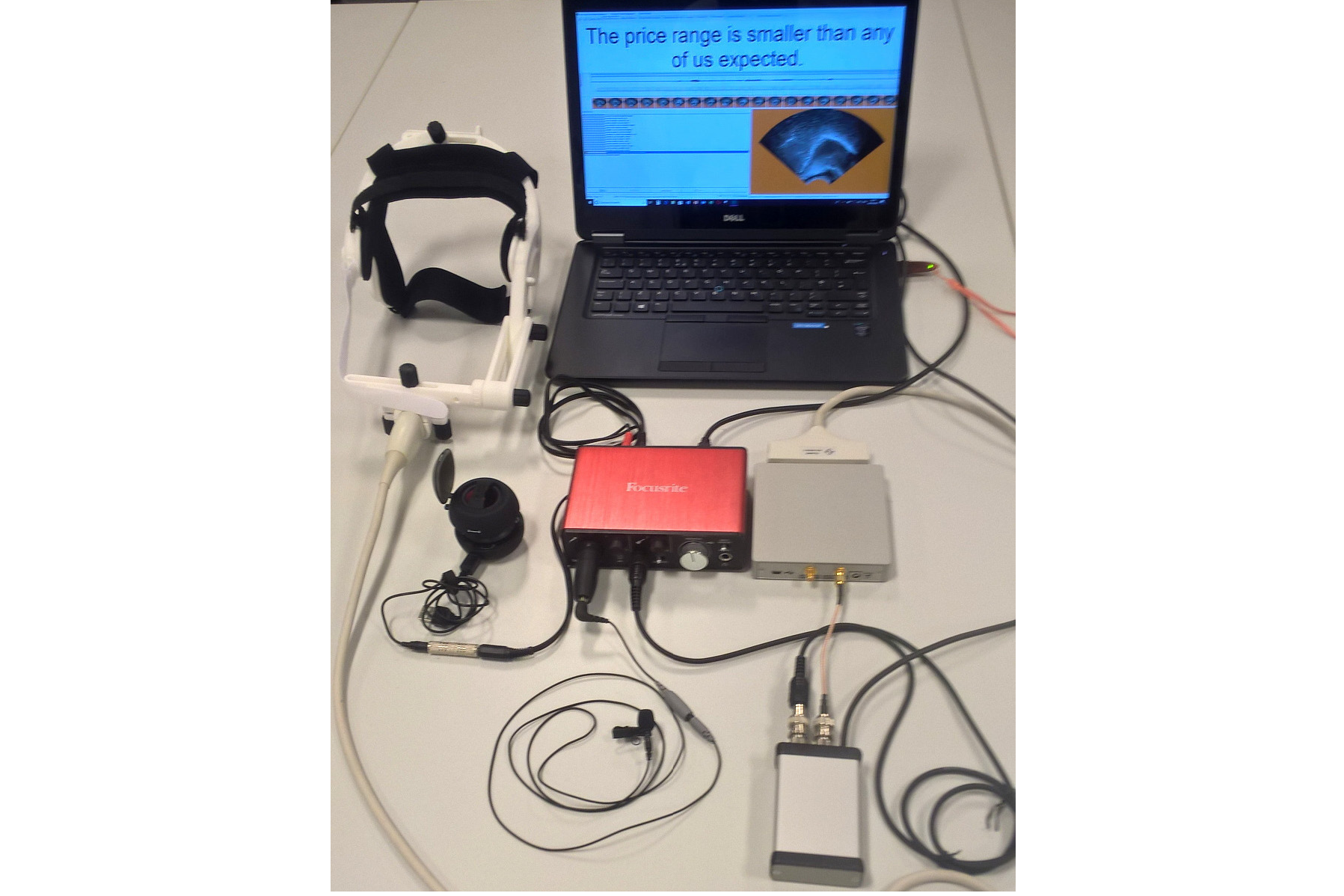

Articulate Instruments - Micro Speech Research Ultrasound System

We use this Articulate Instruments Micro Speech Research Ultrasound System to investigate how fine-grained variation in speech articulation connects to phonological structure.

The ultrasound system is portable and non-invasive, making it ideal for collecting articulatory data in the field.

BIOPAC MP-160 System

The Sound Booth Laboratory has a BIOPAC MP-160 system for physiological data collection. This system supports two BIOPAC Respiratory Effort Transducers and their associated interface modules.

Language Corpora

- The Cornell Linguistics Department has more than 915 language corpora from the Linguistic Data Consortium (LDC), consisting of high-quality text, audio, and video corpora in more than 60 languages. In addition, we receive three to four new language corpora per month under an LDC license maintained by the Cornell Library.

- This Linguistics Department web page lists all our holdings, as well as our licensed non-LDC corpora.

- These and other corpora are available to Cornell students, staff, faculty, post-docs, and visiting scholars for research in the broad area of "natural language processing", which of course includes all ongoing Phonetics Lab research activities.

- This Confluence wiki page - only available to Cornell faculty & students - outlines the corpora access procedures for faculty-supervised research.

Speech Aerodynamics

Studies of the aerodynamics of speech production are conducted with our Glottal Enterprises oral and nasal airflow and pressure transducers.

Electroglottography

We use a Glottal Enterprises EG-2 electroglottograph for noninvasive measurement of vocal fold vibration.

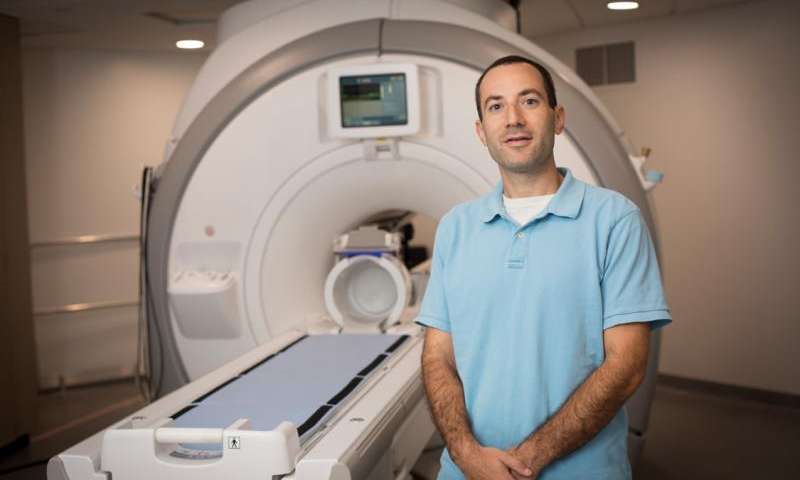

Real-time vocal tract MRI

Our lab is part of the Cornell Speech Imaging Group (SIG), a cross-disciplinary team of researchers using real-time magnetic resonance imaging to study the dynamics of speech articulation.

Articulatory movement tracking

We use the Northern Digital Inc. Wave motion-capture system to study speech articulatory patterns and motor control.

Sound Booth

Our isolated sound recording booth serves a range of purposes, from basic recording to perceptual, psycholinguistic, and ultrasonic experimentation.

We also have the necessary software and audio interfaces to perform low-latency real-time auditory feedback experiments via MATLAB and Audapter.